Read more of this story at Slashdot.

At Software Verify we’ve started to phase out the use of booleans in our code.

The use of booleans hinders readability and maintainability of the code.

The use of booleans also lets unwanted parameter-reordering mistakes slip through unnoticed.

Some bold claims. I will explain.

Problem 1: Readability and maintainability

If you saw this function call in your code what do you think it would do?

```cpp
ok = attachExceptionTracerToRunningProcess(targetProcessId, TRUE, FALSE, _T("d:\\Exception Tracer"));
```

To find out, we’d need to look at the function prototype or the documentation. Most likely you’re going to right-click in Visual Studio and ask to see the function definition, or a tooltip is going to tell you what the argument names are.

Here’s the function definition:

```cpp
int attachExceptionTracerToRunningProcess(const DWORD processId,
                                          const bool processBitDepth,
                                          const bool singleStep,
                                          const TCHAR* dir);
```

To use this function you need to pass a process id, a directory path and two boolean values.

We can guess that passing TRUE for singleStep will start Exception Tracer in single-stepping mode. But that’s implied, not explicit.

But processBitDepth, what does that do? You’ll need to read the documentation for that: FALSE starts 32-bit Exception Tracer, and TRUE starts 64-bit Exception Tracer.

Reading documentation is not a problem, but it doesn’t make your code very readable, does it? It slows things down.

Swap booleans for enumerations

If we swap the bools for typedef’d enumerations then we get something more readable for the function definition:

```cpp
typedef enum _processBitDepth
{
    PROCESS_BIT_DEPTH_32,
    PROCESS_BIT_DEPTH_64,
} PROCESS_BIT_DEPTH;

typedef enum _doSingleStep
{
    DO_SINGLE_STEP_NO,
    DO_SINGLE_STEP_YES,
} DO_SINGLE_STEP;

int attachExceptionTracerToRunningProcess(const DWORD processId,
                                          const PROCESS_BIT_DEPTH processBitDepth,
                                          const DO_SINGLE_STEP singleStep,
                                          const TCHAR* dir);
```

Now the function call becomes:

```cpp
ok = attachExceptionTracerToRunningProcess(targetProcessId, PROCESS_BIT_DEPTH_64, DO_SINGLE_STEP_NO, _T("d:\\Exception Tracer"));
```

and the purpose of the function arguments is explicit rather than implied.
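
The enumerations read just as clearly on the implementing side. Below is a minimal sketch, assuming a hypothetical describeAttach helper that stands in for the real function body, which the article doesn’t show. It uses unsigned long in place of DWORD and leaves out the directory parameter, so it builds outside Windows:

```cpp
#include <string>

// The enumerations from the article (C++ accepts this typedef'd C style).
typedef enum _processBitDepth
{
    PROCESS_BIT_DEPTH_32,
    PROCESS_BIT_DEPTH_64,
} PROCESS_BIT_DEPTH;

typedef enum _doSingleStep
{
    DO_SINGLE_STEP_NO,
    DO_SINGLE_STEP_YES,
} DO_SINGLE_STEP;

// Hypothetical stand-in for the body of attachExceptionTracerToRunningProcess:
// it only describes what it would do. unsigned long stands in for DWORD.
std::string describeAttach(const unsigned long processId,
                           const PROCESS_BIT_DEPTH processBitDepth,
                           const DO_SINGLE_STEP singleStep)
{
    std::string text = "attach to " + std::to_string(processId);

    // No default label, so GCC/Clang -Wswitch (part of -Wall) warns if a
    // new enumerator is added later and not handled here.
    switch (processBitDepth)
    {
    case PROCESS_BIT_DEPTH_32: text += ", 32 bit tracer"; break;
    case PROCESS_BIT_DEPTH_64: text += ", 64 bit tracer"; break;
    }

    switch (singleStep)
    {
    case DO_SINGLE_STEP_NO:  text += ", no single step"; break;
    case DO_SINGLE_STEP_YES: text += ", single step"; break;
    }

    return text;
}
```

Each case label names its meaning, so a reader never has to ask what TRUE means for a given parameter.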

Different styles

You don’t have to use YES and NO; use whatever nomenclature feels correct for the situation:

```cpp
// Alternative spellings of the same enumeration: pick one.
typedef enum _doSingleStep
{
    DO_SINGLE_STEP_NO,
    DO_SINGLE_STEP_YES,
} DO_SINGLE_STEP;

// or

typedef enum _doSingleStep
{
    DO_SINGLE_STEP_OFF,
    DO_SINGLE_STEP_ON,
} DO_SINGLE_STEP;

// or

typedef enum _doSingleStep
{
    DO_SINGLE_STEP_DISABLE,
    DO_SINGLE_STEP_ENABLE,
} DO_SINGLE_STEP;

// or

typedef enum _doSingleStep
{
    DO_SINGLE_STEP_STOP,
    DO_SINGLE_STEP_GO,
} DO_SINGLE_STEP;
```

If you really feel TRUE/FALSE is appropriate, you can use that as well. That seems a bit odd until you see Problems 2 and 3.

```cpp
typedef enum _doSingleStep
{
    DO_SINGLE_STEP_FALSE,
    DO_SINGLE_STEP_TRUE,
} DO_SINGLE_STEP;
```
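
Another style is a C++11 scoped enumeration (enum class). This article does not use it. A minimal sketch, assuming a C++11 or later compiler; SingleStep and wantsSingleStep are hypothetical names. The enumerators are written SingleStep::Yes, so the type name acts as the prefix. Unlike the typedef’d enums, they also don’t convert implicitly to int:

```cpp
#include <type_traits>

// Scoped enumeration: an alternative to the typedef'd enums above.
enum class SingleStep
{
    No,
    Yes,
};

// Hypothetical consumer: the comparison with SingleStep::Yes is explicit.
bool wantsSingleStep(const SingleStep singleStep)
{
    return singleStep == SingleStep::Yes;
}

// Neither direction converts implicitly, so both would be compile errors.
static_assert(!std::is_convertible<SingleStep, int>::value,
              "enum class does not convert to int implicitly");
static_assert(!std::is_convertible<bool, SingleStep>::value,
              "bool does not convert to enum class implicitly");
```

The trade-off is that code which really needs the underlying value has to ask for it with static_cast.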

Problem 2: Implicit casting

If you are using bools, many types (char, int, enumerations, pointers, etc.) convert to them implicitly, without a cast. This can be convenient, but it’s also an avenue for bugs.

You really want to be explicit about any type conversions.

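If you’re using typedef’d enumerations in C++, implicit conversions to them fail to compile. You can’t pass an int, a bool, or a value of a different enumeration type where the enumeration is expected without an explicit cast.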
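
These conversion rules can be checked at compile time with std::is_convertible from &lt;type_traits&gt;. A minimal sketch, assuming C++11 or later; takesBool is a hypothetical function standing in for any bool parameter. One direction still compiles: an unscoped enumeration converts implicitly to int.

```cpp
#include <type_traits>

typedef enum _processBitDepth
{
    PROCESS_BIT_DEPTH_32,
    PROCESS_BIT_DEPTH_64,
} PROCESS_BIT_DEPTH;

typedef enum _doSingleStep
{
    DO_SINGLE_STEP_NO,
    DO_SINGLE_STEP_YES,
} DO_SINGLE_STEP;

// bool accepts ints, chars and enumerations without a cast.
static_assert(std::is_convertible<int, bool>::value, "int -> bool");
static_assert(std::is_convertible<char, bool>::value, "char -> bool");
static_assert(std::is_convertible<PROCESS_BIT_DEPTH, bool>::value, "enum -> bool");

// Nothing converts implicitly to an enumeration, so these would not compile.
static_assert(!std::is_convertible<int, DO_SINGLE_STEP>::value, "int -/-> enum");
static_assert(!std::is_convertible<bool, DO_SINGLE_STEP>::value, "bool -/-> enum");
static_assert(!std::is_convertible<PROCESS_BIT_DEPTH, DO_SINGLE_STEP>::value,
              "enum -/-> other enum");

// Caveat: an unscoped enumeration still converts implicitly to int.
static_assert(std::is_convertible<DO_SINGLE_STEP, int>::value, "enum -> int");

// Hypothetical bool parameter: the wrong enumeration is accepted silently.
bool takesBool(const bool singleStep)
{
    return singleStep;
}
```

Calling takesBool(PROCESS_BIT_DEPTH_64) compiles and returns true, even though a bit depth was passed where a single-step flag was expected.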

Problem 3: Unwanted parameter reordering

If you pass parameters through several functions before they reach the intended function, a future edit (such as refactoring a function’s parameters) can go wrong. A mistake made while updating the calls to the refactored function can leave the arguments reaching the new function definition in the wrong order.

This happened to us with some boolean parameters in our code instrumentation library. We discovered the mistake when we switched to enumerations, because each of these parameters now had its own type.

Incorrect, but compiles

With the old function definition, this example will compile, but the parameters have been incorrectly switched, leading to incorrect behaviour.

```cpp
int attachExceptionTracerToRunningProcess(const DWORD processId,
                                          const bool processBitDepth,
                                          const bool singleStep,
                                          const TCHAR* dir);

int doStartup(const DWORD processId,
              const bool processBitDepth,
              const bool singleStep,
              const TCHAR* dir)
{
    int ok;

    // Bug: singleStep and processBitDepth are swapped, but both are bool,
    // so this compiles without complaint.
    ok = attachExceptionTracerToRunningProcess(processId, singleStep, processBitDepth, dir);

    return ok;
}
```
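
The self-contained sketch below shows the silent failure. The body of attachExceptionTracerToRunningProcess is hypothetical: it only reports which mode it was asked for and returns a std::string instead of an int. unsigned long and const char* stand in for DWORD and const TCHAR*, so it builds outside Windows:

```cpp
#include <string>

// Hypothetical stand-in: reports the mode it was asked for instead of
// attaching anything.
std::string attachExceptionTracerToRunningProcess(const unsigned long processId,
                                                  const bool processBitDepth,
                                                  const bool singleStep,
                                                  const char* dir)
{
    (void)processId; // unused in this sketch
    (void)dir;       // unused in this sketch

    std::string mode = processBitDepth ? "64 bit" : "32 bit";
    mode += singleStep ? ", single step" : ", no single step";
    return mode;
}

std::string doStartup(const unsigned long processId,
                      const bool processBitDepth,
                      const bool singleStep,
                      const char* dir)
{
    // The same swap as in the example: both arguments are bool, so it compiles.
    return attachExceptionTracerToRunningProcess(processId, singleStep, processBitDepth, dir);
}
```

Calling doStartup(1234, true, false, "d:\\Exception Tracer") asks for 64-bit tracing without single stepping. The swapped call produces "32 bit, single step" instead, and nothing warns about it.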

Incorrect, but fails to compile

With the new function definition, this example will fail to compile.

```cpp
int attachExceptionTracerToRunningProcess(const DWORD processId,
                                          const PROCESS_BIT_DEPTH processBitDepth,
                                          const DO_SINGLE_STEP singleStep,
                                          const TCHAR* dir);

int doStartup(const DWORD processId,
              const PROCESS_BIT_DEPTH processBitDepth,
              const DO_SINGLE_STEP singleStep,
              const TCHAR* dir)
{
    int ok;

    // Compile error: a DO_SINGLE_STEP can't be passed as a PROCESS_BIT_DEPTH
    // (or vice versa), so the swap is caught at build time.
    ok = attachExceptionTracerToRunningProcess(processId, singleStep, processBitDepth, dir);

    return ok;
}
```
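
You can also confirm the rejection without a failing build by using C++17’s std::is_invocable_v. This sketch uses the same stand-ins, unsigned long for DWORD and const char* for const TCHAR*. Only a declaration of attachExceptionTracerToRunningProcess is needed, because decltype doesn’t evaluate anything:

```cpp
#include <type_traits>

typedef enum _processBitDepth
{
    PROCESS_BIT_DEPTH_32,
    PROCESS_BIT_DEPTH_64,
} PROCESS_BIT_DEPTH;

typedef enum _doSingleStep
{
    DO_SINGLE_STEP_NO,
    DO_SINGLE_STEP_YES,
} DO_SINGLE_STEP;

// Declaration only: it is never called, so no definition is required.
int attachExceptionTracerToRunningProcess(const unsigned long processId,
                                          const PROCESS_BIT_DEPTH processBitDepth,
                                          const DO_SINGLE_STEP singleStep,
                                          const char* dir);

using AttachFn = decltype(&attachExceptionTracerToRunningProcess);

// The intended argument order is accepted...
constexpr bool correctOrderCompiles = std::is_invocable_v<
    AttachFn, unsigned long, PROCESS_BIT_DEPTH, DO_SINGLE_STEP, const char*>;

// ...while the swapped order from doStartup is rejected, because neither
// enumeration converts implicitly to the other.
constexpr bool swappedOrderCompiles = std::is_invocable_v<
    AttachFn, unsigned long, DO_SINGLE_STEP, PROCESS_BIT_DEPTH, const char*>;

static_assert(correctOrderCompiles, "intended order should compile");
static_assert(!swappedOrderCompiles, "swapped order should not compile");
```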

Making the change

It’s a bit more work to create the enumeration and use it.

With an existing code base this can be time-consuming work, depending on how many places in your code base need to use the new enumerated type.

One type at a time

If you are going to make this change, I would recommend changing one parameter type at a time and doing a build after each type change.

This seems more time-consuming, but in practice we’ve found it easier than creating several new types at once and then building. That approach leaves you dealing with the resulting chaos until you’ve fixed up all the function definitions and calls.
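
When you convert one parameter type at a time, some code will still hold a bool where a newly converted function expects the enumeration. One way to bridge that gap is an explicit conversion helper at the boundary. It keeps every remaining conversion visible and easy to search for. A minimal sketch; processBitDepthFromBool, bitsFor and legacyCaller are hypothetical names, not part of Software Verify’s code:

```cpp
typedef enum _processBitDepth
{
    PROCESS_BIT_DEPTH_32,
    PROCESS_BIT_DEPTH_64,
} PROCESS_BIT_DEPTH;

// Hypothetical boundary helper: the only place a bool becomes a
// PROCESS_BIT_DEPTH. Searching for its name shows what is left to migrate.
inline PROCESS_BIT_DEPTH processBitDepthFromBool(const bool is64Bit)
{
    return is64Bit ? PROCESS_BIT_DEPTH_64 : PROCESS_BIT_DEPTH_32;
}

// Already converted: takes the enumeration.
int bitsFor(const PROCESS_BIT_DEPTH processBitDepth)
{
    return processBitDepth == PROCESS_BIT_DEPTH_64 ? 64 : 32;
}

// Not yet converted: still receives a bool, so it converts explicitly.
int legacyCaller(const bool processBitDepth)
{
    return bitsFor(processBitDepthFromBool(processBitDepth));
}
```

Once no callers of the helper remain, you can delete it and the migration of that type is complete.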

If, like us, you’ve got many solutions and many projects in each solution then the quickest way to do these builds is to load the solutions/projects into Visual Studio Project Builder then build all projects of a specific configuration. This saves a lot of time.

Should we change our whole codebase?

There are certainly benefits to doing this. You might find some errors where parameters have been passed in the wrong order.

But for large codebases, changing every boolean use is probably impractical (it will take a long time). The best approach is to change them when you’re editing some code that is using them.

Conclusion

As you can see there are multiple benefits to using enumerations rather than booleans in your code.

You get improved readability and improved type safety.

The post Stop using booleans, they’re hurting your code appeared first on Software Verify.

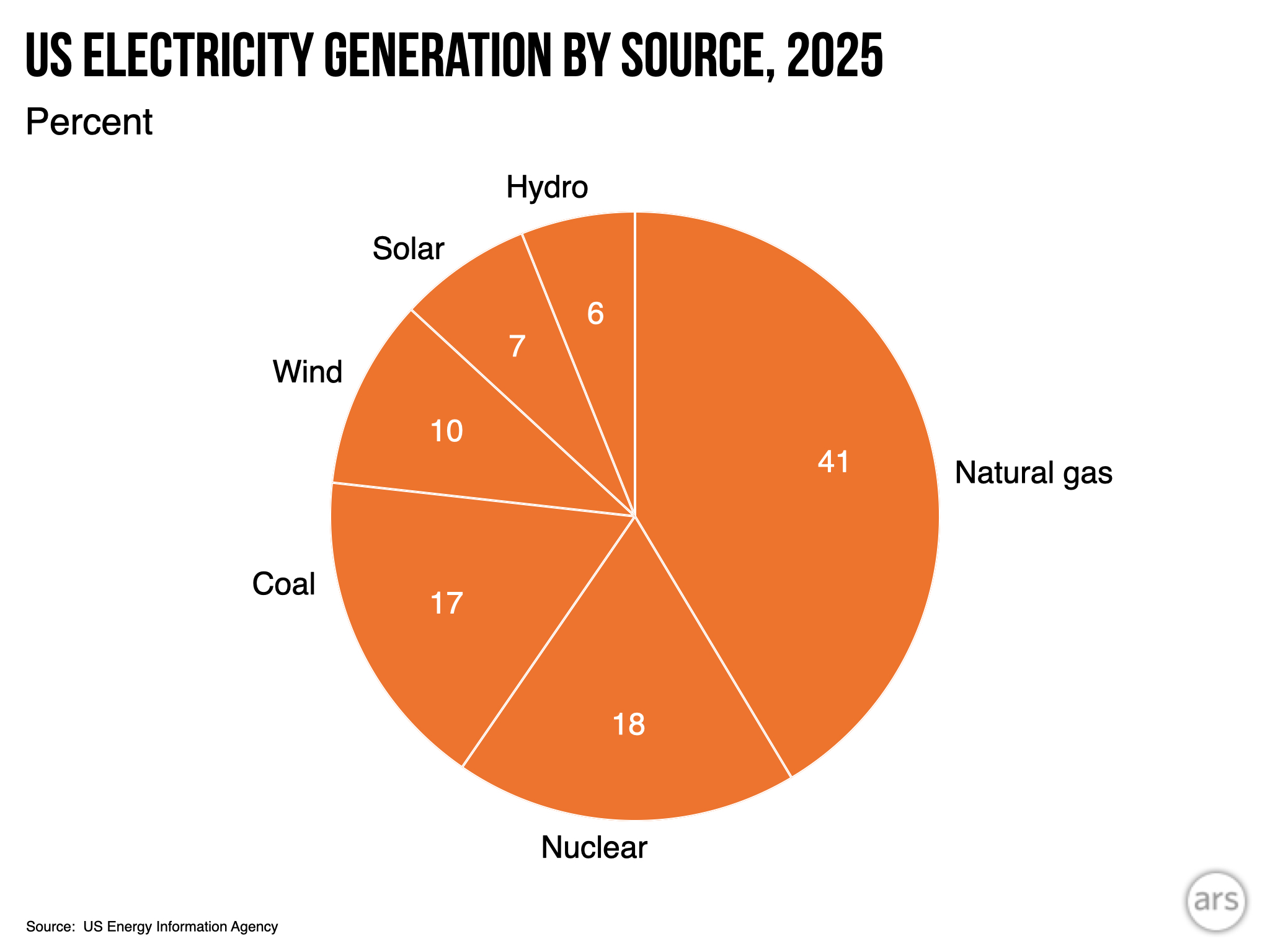

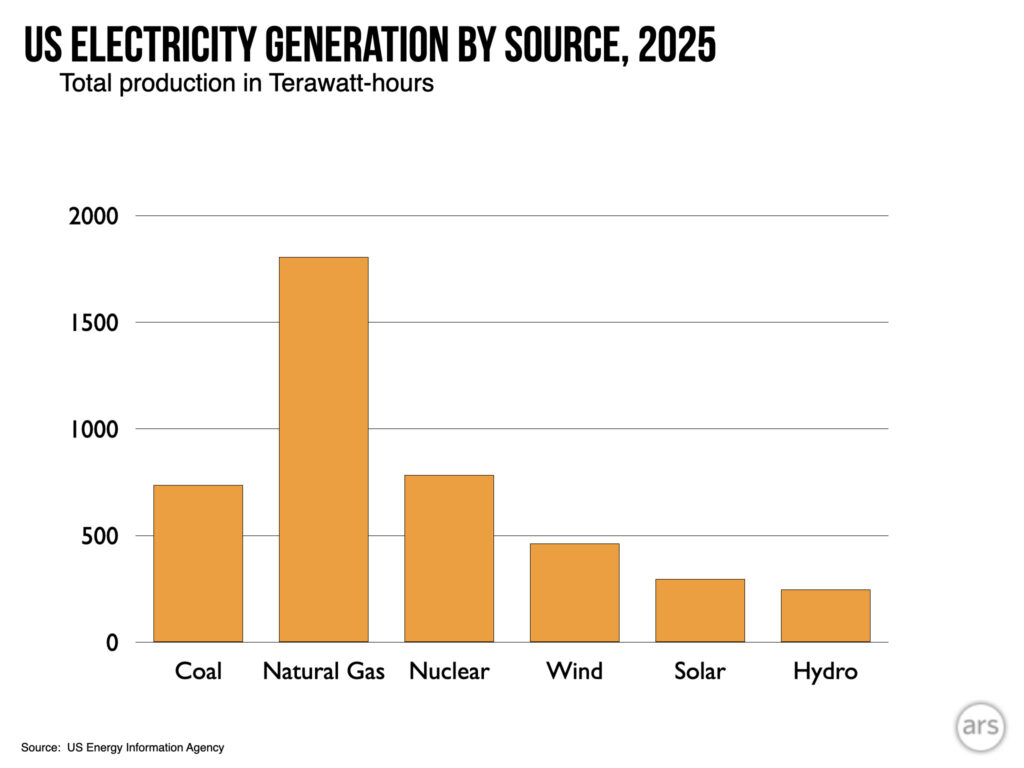

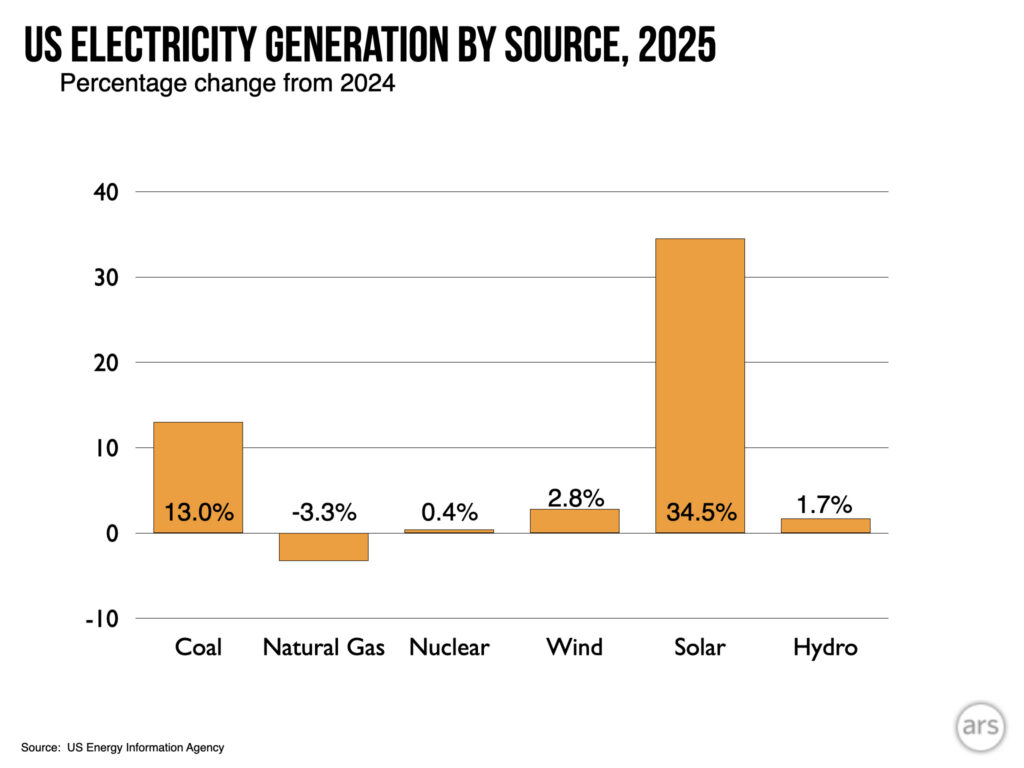

On Tuesday, the US Energy Information Administration released full-year data on how the country generated electricity in 2025. It's a bit of a good news/bad news situation. The bad news is that overall demand rose appreciably, and a fair chunk of that was met by additional coal use. On the good side, solar continued its run of astonishing growth, generating 35 percent more power than a year earlier and surpassing hydroelectric power for the first time.

Shifting markets

Overall, electrical consumption in the US rose by 2.8 percent, or about 121 terawatt-hours. Consumption had been largely flat for several decades, with efficiency and the decline of industry offsetting the effects of population and economic growth. There were plenty of year-to-year changes, however, driven by factors ranging from heating and cooling demand to a global pandemic. Given that history, the growth in demand in 2025 is a bit concerning, but it's not yet a clear signal that the factors that will inevitably drive growth have kicked in.

(These factors include things like the switch to heat pumps, the electrification of transportation, and the growth in data centers. While the first two of those involve a more efficient use of energy overall, they involve electricity replacing direct use of fossil fuels, and so will increase demand on the grid.)

The story of the year is how that demand was met. Had demand grown more slowly, the additional 85 terawatt-hours generated by expanded utility-scale and small solar installations would easily have met it. As it was, the growth of utility-scale solar was only sufficient to cover about two-thirds of the rising demand (or 73 percent if you include wind power). With no new nuclear plants on the horizon, the remainder had to come from fossil fuels.
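
The figures above can be cross-checked with a little arithmetic, using only the numbers in this article. Both inputs are rounded, so the implied 2024 total is approximate:

$$
\text{2024 consumption} \approx \frac{121\ \text{TWh}}{0.028} \approx 4{,}320\ \text{TWh},
\qquad
\frac{85\ \text{TWh (new solar)}}{121\ \text{TWh (new demand)}} \approx 0.70
$$

So all new solar, utility-scale and small installations together, covered roughly 70 percent of the increase. That is a little more than the two-thirds attributed to utility-scale solar alone.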

Hydropower has become the first energy source to be passed by solar power. It won't be the last.

Credit: John Timmer

And the fossil fuel market has gotten increasingly complicated. In the recent past, every increase in demand would have been met by additional natural gas generation, given the abundance of local production. But high demand and increased tariffs have led to rising costs and long delays for the hardware that generates electricity by burning natural gas. President Trump also reversed Biden's block on new facilities that export natural gas in liquid form, meaning the local market is increasingly competing with international sales, raising prices.

Coal has thus been more economically viable than in years past, and electricity generated from burning it rose by 13 percent. Chris Wright, the secretary of energy, has also ordered a number of coal plants slated for closure to remain available. It's unclear whether many of them are generating power, since they were slated for closure precisely because demand could be met more economically without them. The administration has probably had a stronger impact on coal use through its promotion of natural gas exports.

The net result of the above policies? Coal, solar, and wind all produced more power in 2025. Demand rose by more than any of these sources did individually, but by less than their total increase—the excess went toward displacing natural gas.

What's coming next?

While the Trump administration has been hostile to renewable energy, there's only so much it can do to fight the economics. A recent analysis of planned projects indicates that the US will see another 43 GW of solar capacity added in 2026—far more than the 27 GW added in 2025. That will be joined by 12 GW of wind power, with over 10 percent of that coming from two of the offshore wind projects that the administration has repeatedly failed to block. The largest wind farm yet built in the US, a 3.6 GW monster in New Mexico, is also expected to begin operations in 2026.

That means wind and solar are well-positioned to outpace hydropower as they provide an ever-larger share of US electricity. It's also likely to keep them ahead of any increase in coal power that occurs next year. Combined with hydropower, the growth in wind and solar should push renewable energy to nearly a quarter of the US electricity mix, barring a massive increase in demand.

Another major factor that will boost renewables is the rapid expansion of battery storage, which reduces the risk that excess solar power goes to waste. Often, the two are co-located so that the batteries can use the same transmission infrastructure after solar stops producing. The EIA foresees 24 GW of new battery capacity being added to the grid, much of it being installed in California and Texas. Of the 6.3 GW of new natural gas generation expected in 2026, 2.8 GW are expected to be combustion turbines, which are often used to compensate for the variability of renewable power.

The US is near a key pivot point, with wind and solar nearly (but not quite) growing fast enough to offset a significant rise in demand, and is likely to reach that point within the next few years. And grid operators are building the sort of support facilities that will blend in well with a future dominated by renewables. Unfortunately, market dynamics are causing a rise in coal use that is likely to offset trends that would otherwise reduce our carbon emissions.